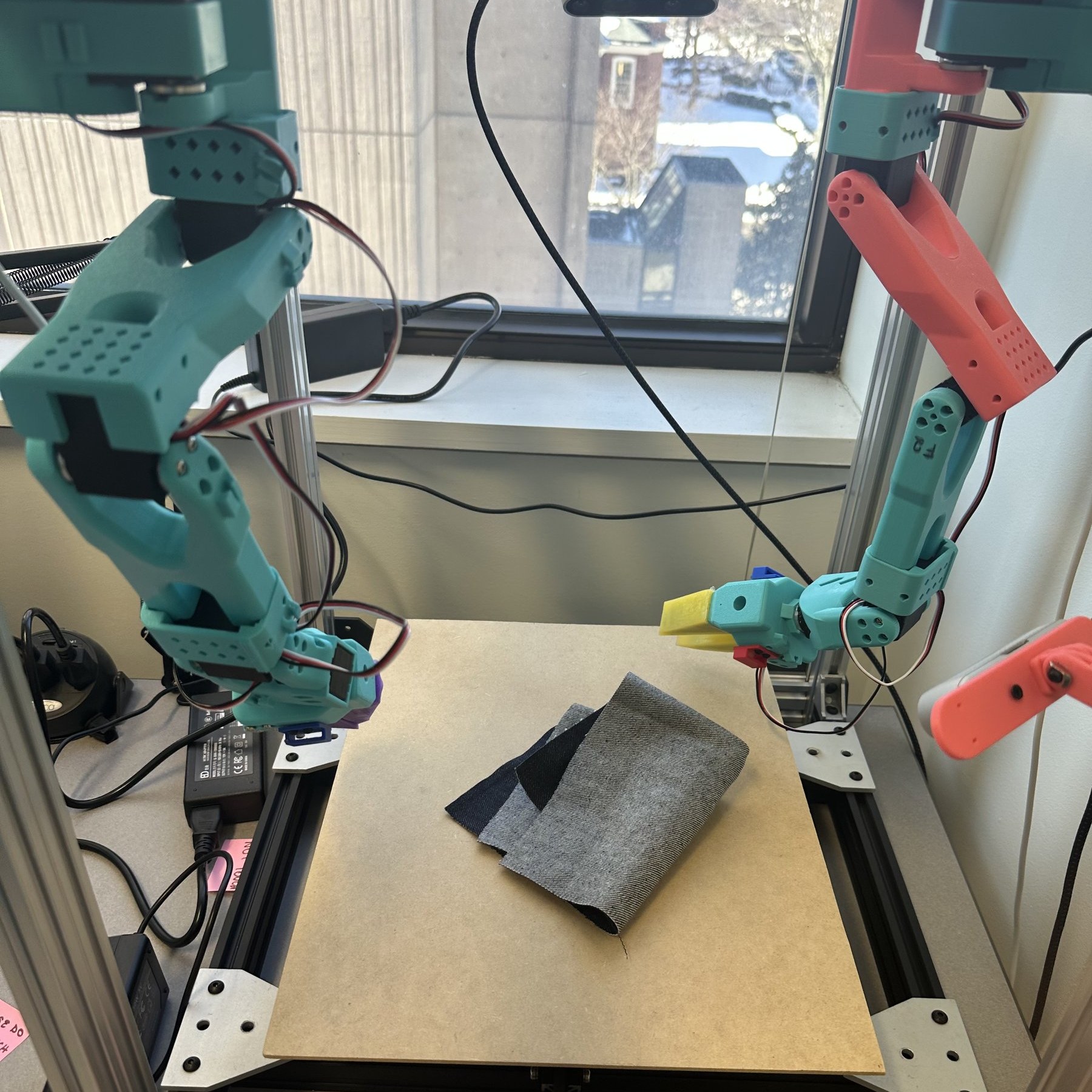

The Sew Unit

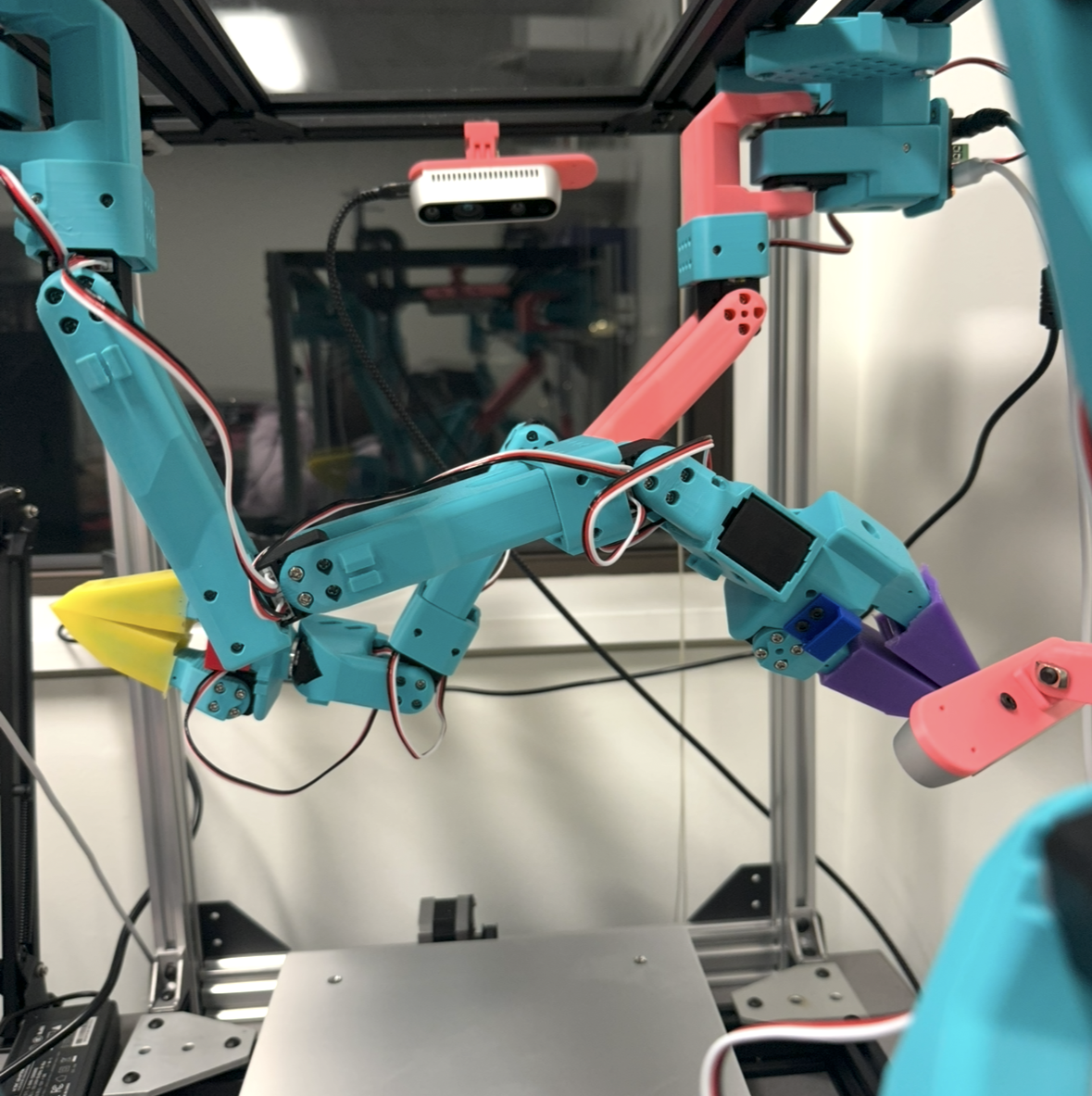

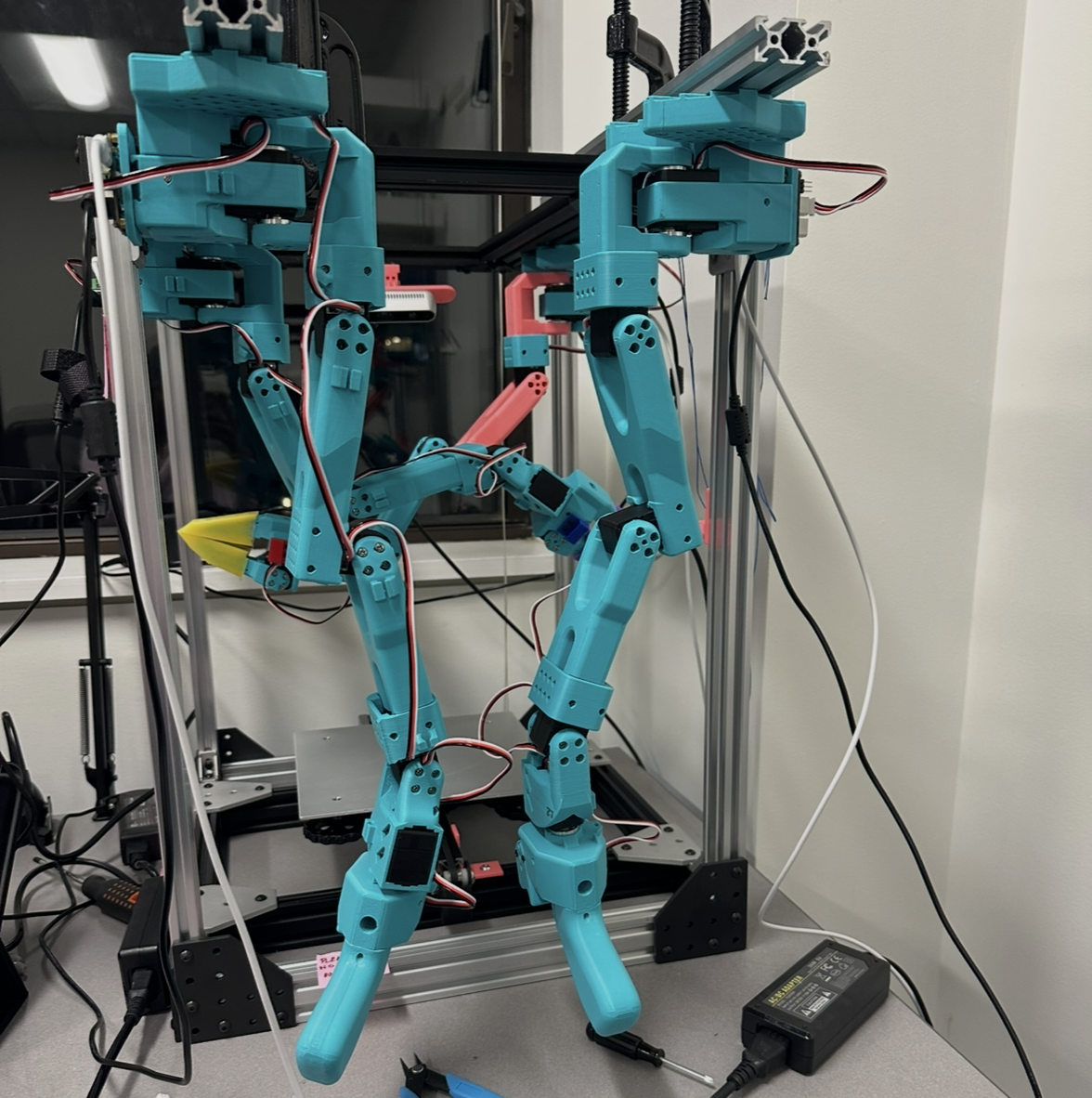

A bimanual cloth manipulation platform I designed and built from scratch in grad school. Custom aluminum extrusion frame, two inverted SO-101 arms, ROS2/MoveIt motion planning, and a leader-follower teleoperation system for data collection.

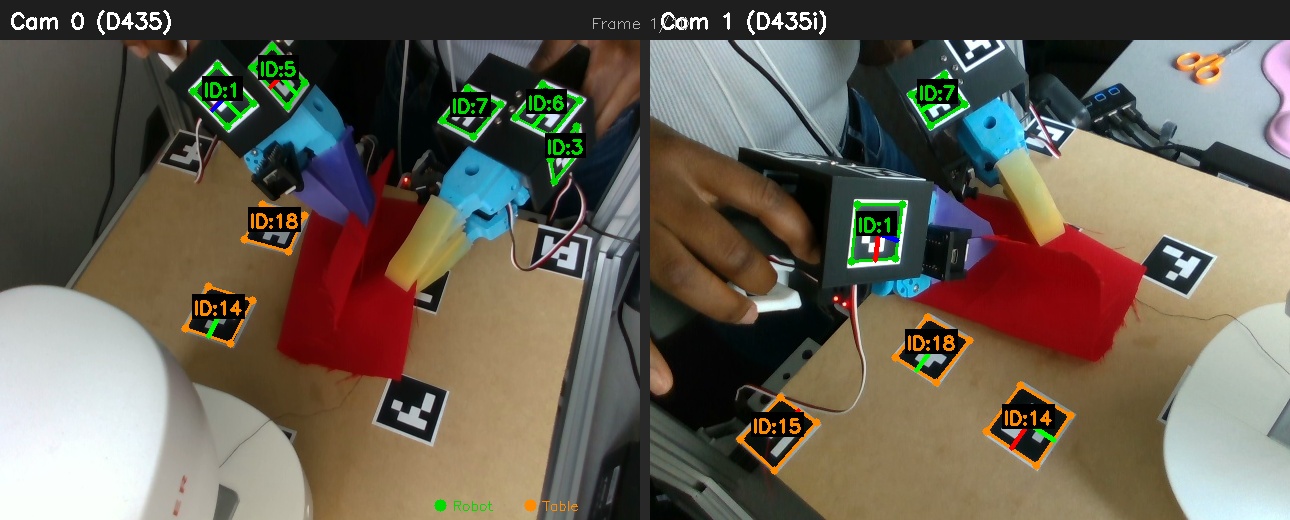

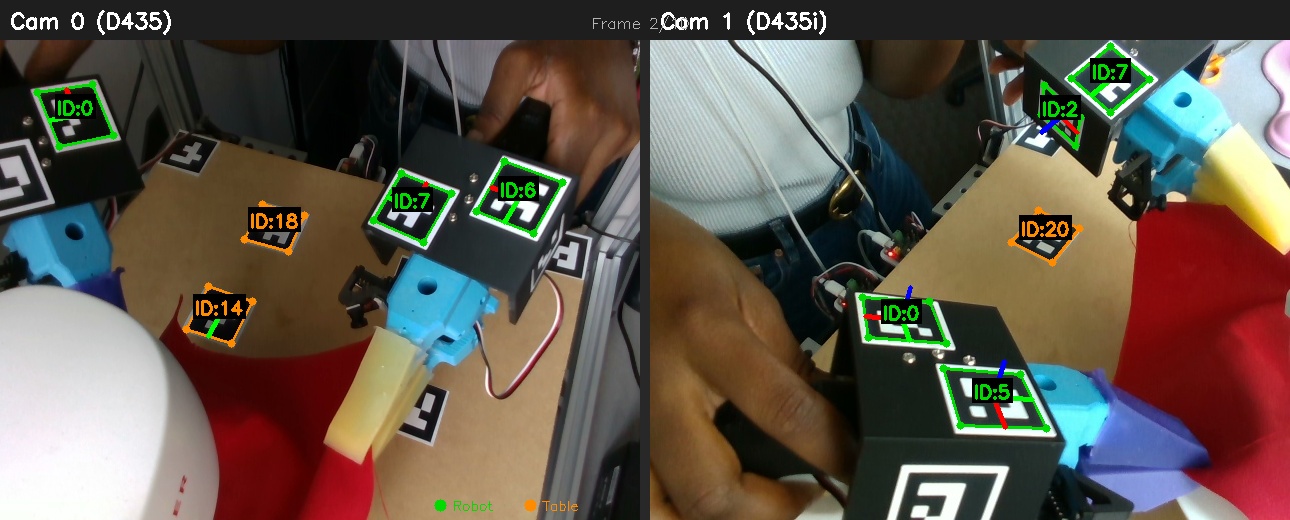

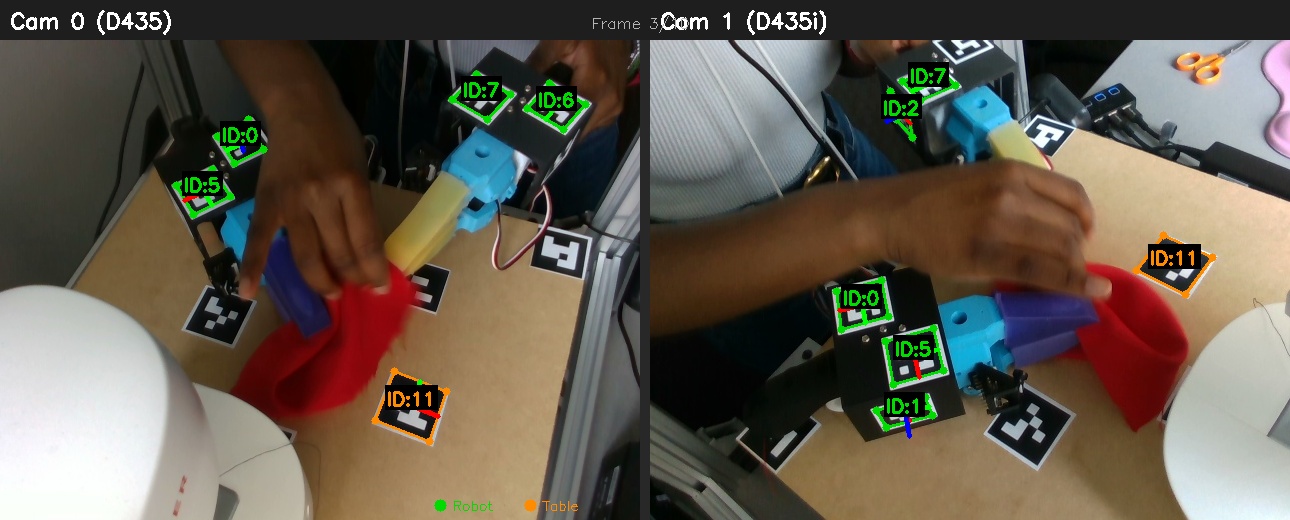

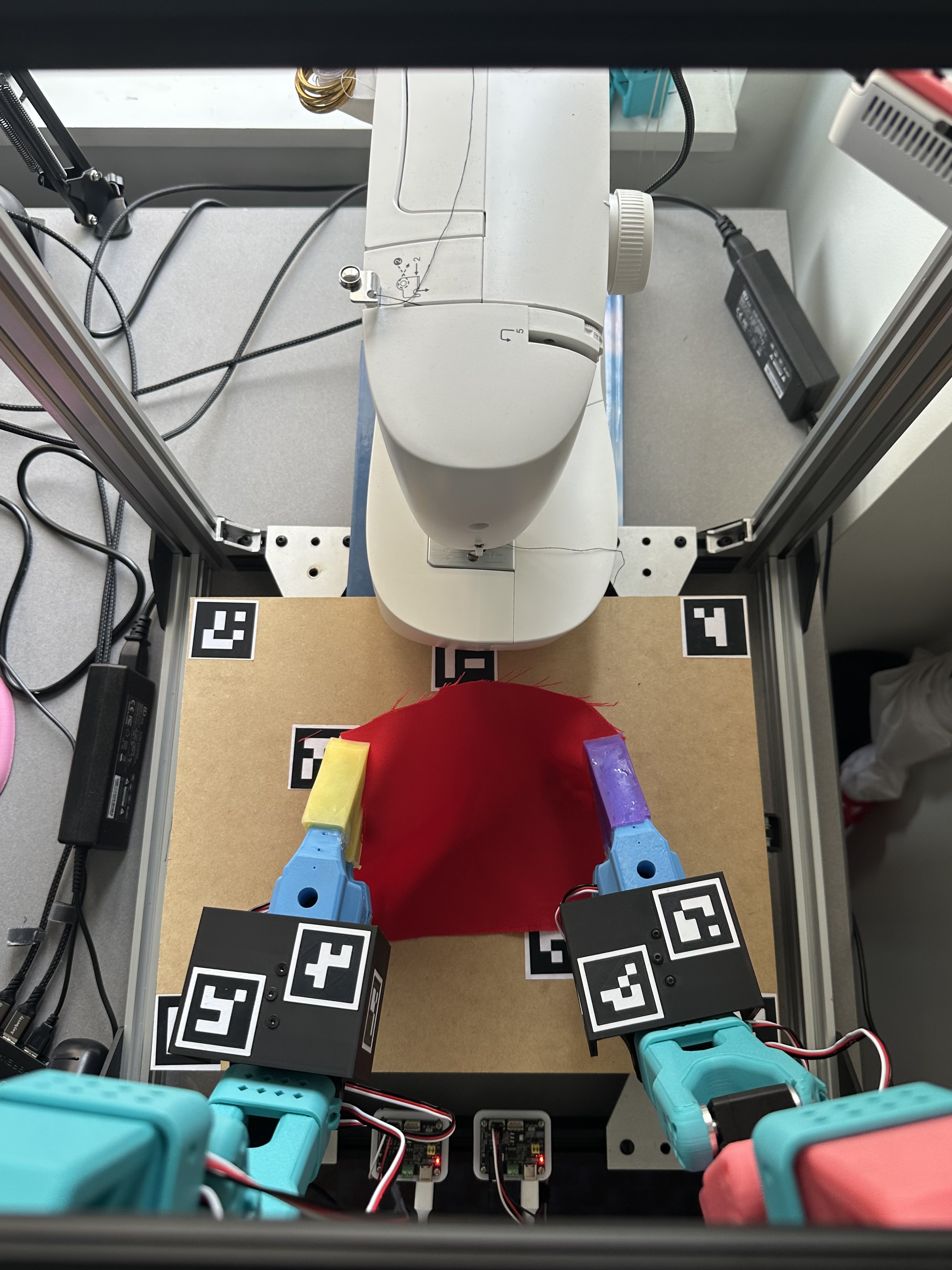

Handheld Data Collection Grippers

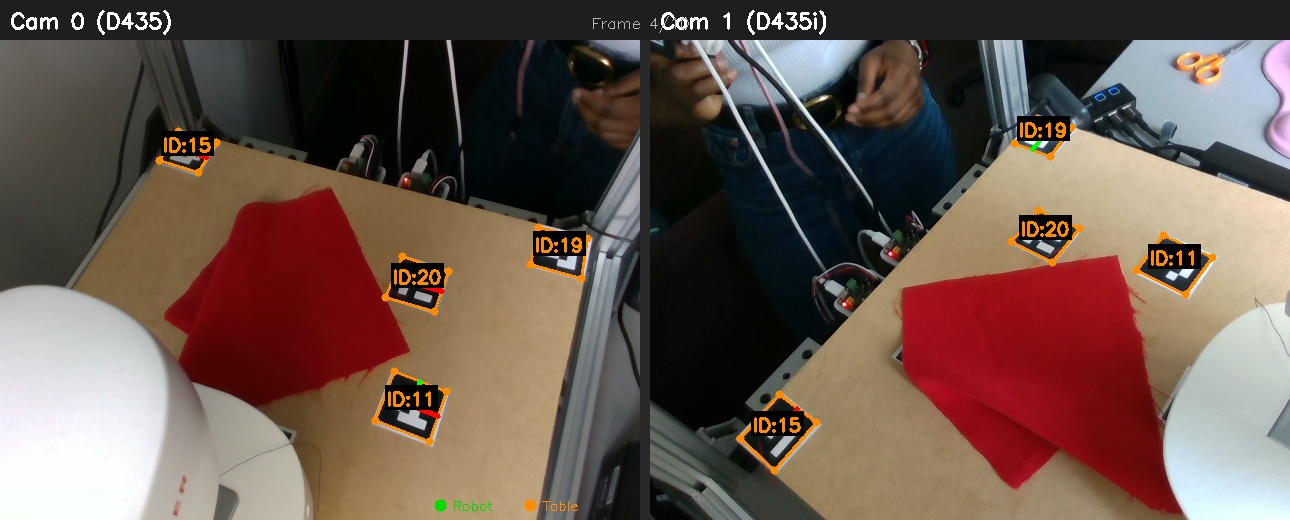

Current setup with handheld data collection grippers inside the cell, tracked by stereo ArUco detection from two RealSense cameras. Green markers are mounted on the handheld grippers; orange markers on the table are used for calibration.
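Stereo detection means each marker center is seen by two cameras, and its 3D position comes from intersecting the two viewing rays. The following is a minimal sketch of one way to do that, the midpoint method. It assumes each camera center and ray direction are already expressed in a shared frame from calibration. The source doesn't say which triangulation the pipeline uses. OpenCV's `cv2.triangulatePoints` (linear triangulation from projection matrices) is a common alternative.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])

def _scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)

def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2.

    c1, c2: camera centers; d1, d2: ray directions toward the detected marker,
    all assumed to be in the same (calibrated) frame.
    """
    w0 = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; cannot triangulate")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = _add(c1, _scale(d1, s))
    p2 = _add(c2, _scale(d2, t))
    return _scale(_add(p1, p2), 0.5)
```

Noisy detections rarely produce rays that truly intersect. The distance between the two closest points therefore doubles as a cheap sanity check on calibration quality.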

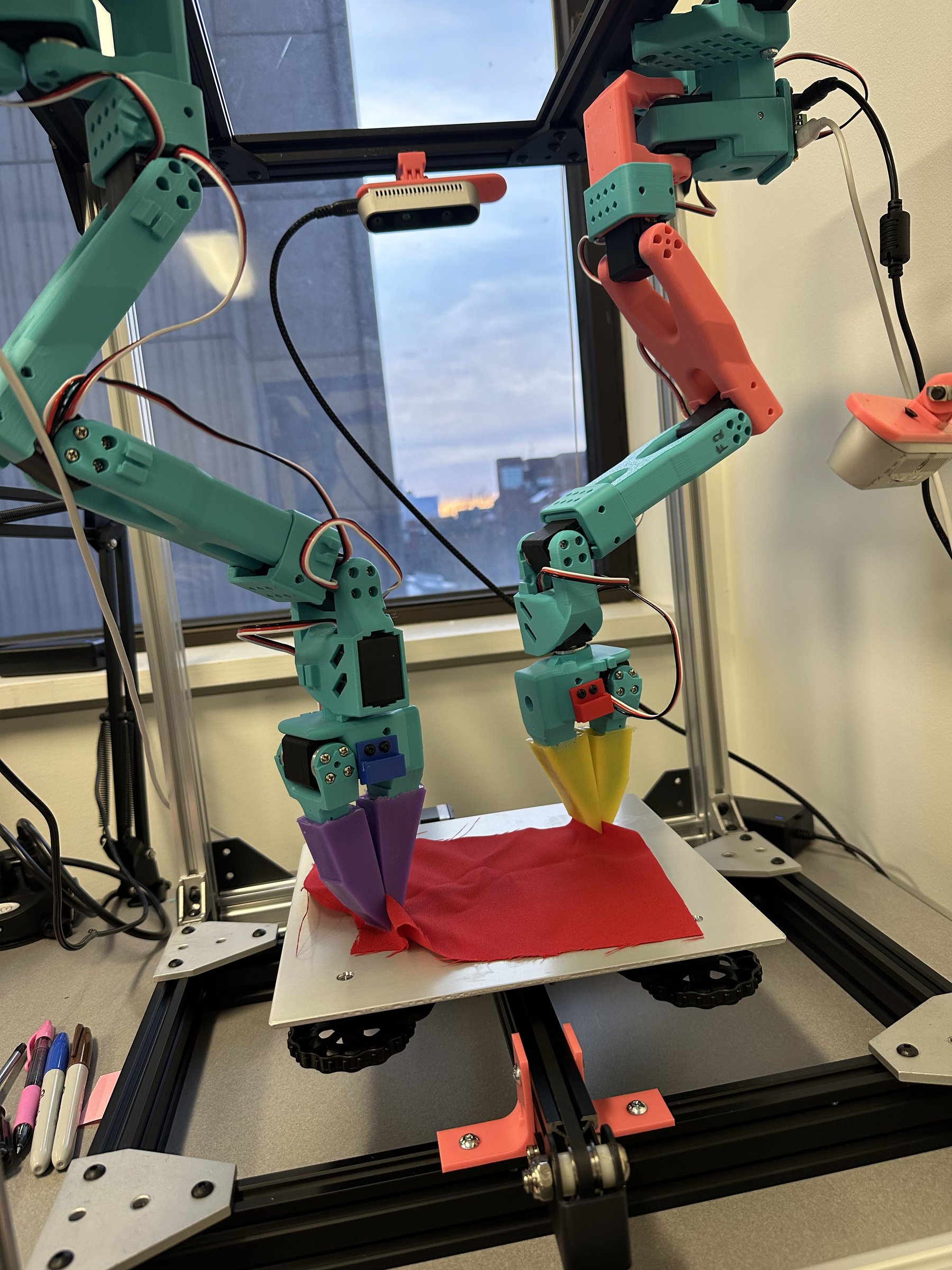

Sewing Machine + Robot Arms Setup

Hardware

- Arms: Two SO-101 arms from the Hugging Face LeRobot platform, open-source research arms I adapted for inverted mounting. I didn't design the robots; the work was in the URDF modifications for flipped gravity compensation, joint limit tuning, and merging two independent controllers onto a single unified serial bus.

- Workspace: Ender 3 printer bed, which is flat, rigid, and replaceable

- Servos: Feetech STS/SCS-series serial bus servos, 12 total (6 per arm)

- Controllers: Unified serial controller managing both arms over a single bus, built after two independent controllers kept causing port conflicts
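Sharing one bus means all 12 servos need distinct IDs. Two arms set up independently can end up reusing the same IDs, which would collide on a shared bus. The following is a minimal sketch of one hypothetical layout (left arm 1–6, right arm 7–12) and of splitting a bus-wide read back into per-arm joint vectors. The actual ID assignment isn't stated in the source.

```python
from typing import Dict, List

ARM_NAMES = ("left", "right")  # hypothetical arm names
JOINTS_PER_ARM = 6

def servo_id(arm: str, joint_index: int) -> int:
    """Hypothetical bus ID for a joint: left arm -> 1..6, right arm -> 7..12."""
    if arm not in ARM_NAMES:
        raise ValueError(f"unknown arm {arm!r}")
    if not 0 <= joint_index < JOINTS_PER_ARM:
        raise ValueError(f"joint index {joint_index} out of range")
    return ARM_NAMES.index(arm) * JOINTS_PER_ARM + joint_index + 1

def split_by_arm(readings: Dict[int, int]) -> Dict[str, List[int]]:
    """Regroup {servo_id: raw_position} read from the shared bus into per-arm joint lists."""
    return {
        arm: [readings[servo_id(arm, j)] for j in range(JOINTS_PER_ARM)]
        for arm in ARM_NAMES
    }
```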

Motion Planning & Digital Twin

ROS2 and MoveIt with custom URDF configurations. The inverted mounting orientation required reworking joint limits and gravity compensation; small sign errors in the URDF produce impossible trajectories. The same trajectory recorded via teleoperation can be played back on the real robot and verified against its digital twin.
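Mounting the arm upside down is equivalent to rotating its base by π about a horizontal axis relative to the world. In a URDF, one common way to express this is the `rpy` of the fixed joint that attaches the base to the world. Gravity is fixed in the world frame, so expressed in the base frame it flips from (0, 0, -g) to (0, 0, +g), and every gravity-compensation torque changes sign. The following is a minimal sketch for a single planar link with illustrative mass and center-of-mass values. The real SO-101 chain has more links and its own frame conventions.

```python
import math
from typing import List, Tuple

G = 9.81  # m/s^2
Vec3 = Tuple[float, float, float]

def rot_x(theta: float) -> List[List[float]]:
    """Rotation matrix about the x-axis (maps base-frame vectors into the world frame)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def gravity_in_base(R_world_base: List[List[float]]) -> Vec3:
    """World gravity (0, 0, -G) expressed in the base frame: g_base = R^T g_world."""
    g_world = (0.0, 0.0, -G)
    return tuple(sum(R_world_base[r][c] * g_world[r] for r in range(3)) for c in range(3))

def gravity_torque_y(mass: float, com_dist: float, q: float, g_base: Vec3) -> float:
    """y-component of r x (m * g_base) for a link whose center of mass lies
    com_dist from the joint, at angle q in the base x-z plane."""
    rx, rz = com_dist * math.cos(q), com_dist * math.sin(q)
    fx, fz = mass * g_base[0], mass * g_base[2]
    return rz * fx - rx * fz
```

With the arm upright, `gravity_in_base(rot_x(0.0))` gives (0, 0, -9.81). Inverted, `gravity_in_base(rot_x(math.pi))` gives (0, ~0, +9.81), so the torque the controller must cancel has the opposite sign.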

Teleoperation & Data Collection with Leader Arms

The primary data collection method is leader-follower teleoperation. The leader arms share the same morphology as the follower arms, so joint angles map directly with no IK guessing. What you do with the leader is exactly what the follower does.
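Because the leader and follower share a morphology, the mapping is essentially the identity on joint angles. The following is a minimal sketch that adds two steps the source doesn't describe. It assumes hypothetical per-joint zero offsets to absorb small calibration differences between the two arms. It also clamps each command to the follower's joint limits.

```python
from dataclasses import dataclass
from typing import List, Sequence

@dataclass(frozen=True)
class JointCal:
    offset: float  # rad; hypothetical zero-offset between leader and follower
    lower: float   # rad; follower joint lower limit
    upper: float   # rad; follower joint upper limit

def leader_to_follower(q_leader: Sequence[float], cals: Sequence[JointCal]) -> List[float]:
    """Copy leader joint angles to the follower, applying offsets and clamping to limits."""
    if len(q_leader) != len(cals):
        raise ValueError("one calibration entry per joint is required")
    return [min(max(q + c.offset, c.lower), c.upper) for q, c in zip(q_leader, cals)]
```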

Perception & Data Collection

The platform collects synchronized RGB-D video from four cameras while recording joint states at a configurable rate (10–30 Hz). Turning that raw data into 3D particle tracks that dynamics models can train on required building a complete perception pipeline from scratch.
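Cameras and joint states run at different rates, so each frame has to be paired with a joint sample. The following is a minimal sketch of nearest-timestamp matching that drops a pairing when the gap is too large. The source doesn't say how synchronization is done, and `align_frames` and `max_gap` are hypothetical names.

```python
import bisect
from typing import List, Optional, Sequence

def nearest_index(sorted_ts: Sequence[float], t: float) -> int:
    """Index of the timestamp in sorted_ts closest to t (sorted_ts must be non-empty)."""
    i = bisect.bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    # Pick whichever neighbor is closer; ties go to the earlier sample.
    return i if sorted_ts[i] - t < t - sorted_ts[i - 1] else i - 1

def align_frames(frame_ts: Sequence[float], joint_ts: Sequence[float],
                 max_gap: float) -> List[Optional[int]]:
    """For each camera frame, the index of the nearest joint sample, or None if it is farther than max_gap."""
    if not joint_ts:
        return [None] * len(frame_ts)
    out: List[Optional[int]] = []
    for t in frame_ts:
        j = nearest_index(joint_ts, t)
        out.append(j if abs(joint_ts[j] - t) <= max_gap else None)
    return out
```

At 30 Hz the joint samples are about 33 ms apart, so a `max_gap` of roughly half a period (≈17 ms) flags frames that have no nearby joint sample. At 10 Hz, half a period is 50 ms.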

Camera setup: 4× Intel RealSense cameras (D435i and D405) surrounding the workspace, each calibrated individually with a ChArUco board (DICT_4X4_50, 5×4, 40 mm squares). The best stereo pair achieved 0.68 px RMS reprojection error. Automated hand-eye calibration maps each camera into robot-base coordinates.
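Hand-eye calibration produces a rigid transform per camera. Mapping camera-frame points into the robot base is then one homogeneous multiply. The following is a minimal sketch in plain Python. For a static camera, calibration yields `T_base_cam` directly. For a wrist-mounted camera it would be chained through the end-effector pose (`T_base_cam = T_base_ee @ T_ee_cam`). The source doesn't say which cameras are mounted where, so both names are illustrative.

```python
from typing import List, Sequence, Tuple

Mat4 = List[List[float]]

def make_transform(R: Sequence[Sequence[float]], t: Sequence[float]) -> Mat4:
    """Pack a 3x3 rotation and a translation into a 4x4 homogeneous transform."""
    return [[R[i][0], R[i][1], R[i][2], t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def compose(A: Mat4, B: Mat4) -> Mat4:
    """Matrix product A @ B, e.g. T_base_cam = T_base_ee @ T_ee_cam."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]

def transform_point(T: Mat4, p: Sequence[float]) -> Tuple[float, float, float]:
    """Map a 3D point from the source frame into the target frame: p' = R p + t."""
    x, y, z = p
    return tuple(T[i][0] * x + T[i][1] * y + T[i][2] * z + T[i][3] for i in range(3))
```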

Cloth and gripper segmentation: Object detection and segmentation with YOLOv5, trained on images hand-annotated in labelImg, with a separate detector per fabric type (black, denim, red), each trained for ~500 epochs on train/val/test splits. Multi-camera RGB-D point clouds are color-coded by source camera for registration debugging.
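Color-coding by source camera replaces each point's true RGB with a flat per-camera color. A misregistered camera then shows up as a visibly offset layer in the merged cloud. The following is a minimal sketch assuming the clouds are already transformed into the robot base frame. The palette and camera names are hypothetical.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]
RGB = Tuple[int, int, int]

# Hypothetical debug palette: one flat, high-contrast color per camera slot.
PALETTE: List[RGB] = [(255, 0, 0), (0, 200, 0), (0, 0, 255), (255, 200, 0)]

def merge_debug_colored(clouds: Dict[str, List[Point]]) -> List[Tuple[Point, RGB]]:
    """Merge per-camera clouds (already in the base frame), tagging every point
    with its source camera's debug color instead of its real RGB."""
    merged: List[Tuple[Point, RGB]] = []
    # Sorting camera names keeps the camera-to-color assignment stable across runs.
    for slot, cam in enumerate(sorted(clouds)):
        color = PALETTE[slot % len(PALETTE)]
        merged.extend((p, color) for p in clouds[cam])
    return merged
```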

Gripper tracking: Started with HSV color thresholding, then replaced it with CoTracker 3 (Meta’s learned point tracker) for robustness to changing lab lighting and partial occlusion. CoTracker 3 proved reliable across all 11 collected trajectories.
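The HSV approach keeps pixels whose hue falls in a band and whose saturation and value clear minimums. It then takes the centroid of the kept pixels. The following is a minimal stdlib sketch with hypothetical thresholds for the green gripper markers. OpenCV stores 8-bit hue as 0–179, so thresholds tuned there differ numerically from the degree values used here. The fixed `s_min`/`v_min` cutoffs are exactly what shifts when lab lighting changes, which is the fragility that motivated the switch.

```python
import colorsys
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]

def is_marker_pixel(rgb: RGB, hue_deg: Tuple[float, float] = (90.0, 150.0),
                    s_min: float = 0.4, v_min: float = 0.3) -> bool:
    """True if an 8-bit RGB pixel falls in the (hypothetical) green-marker HSV band."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)  # colorsys returns h, s, v in [0, 1]
    return hue_deg[0] <= h * 360.0 <= hue_deg[1] and s >= s_min and v >= v_min

def marker_centroid(image: List[List[RGB]], **thresholds) -> Optional[Tuple[float, float]]:
    """(row, col) centroid of pixels passing the threshold, or None if none match."""
    row_sum = col_sum = count = 0
    for r, row in enumerate(image):
        for c, px in enumerate(row):
            if is_marker_pixel(px, **thresholds):
                row_sum += r
                col_sum += c
                count += 1
    return (row_sum / count, col_sum / count) if count else None
```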

Dataset: 11 annotated manipulation trajectories covering single-arm and dual-arm motions on three fabric types (black, denim, red), with synchronized joint states and multi-camera RGB-D.

What I Learned

Inverted mounting is not trivial. Gravity compensation and joint limits both flip, and small sign errors cause the planner to propose impossible trajectories. I found this out through hours of debugging URDF transforms.
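One common form of sign error arises when a joint's axis direction is reversed, for example `<axis xyz="0 0 -1"/>` instead of `<axis xyz="0 0 1"/>` in the URDF. The same physical pose then has the opposite joint angle, so the limit interval [lower, upper] must become [-upper, -lower]. The following is a minimal sketch with hypothetical limit values. It illustrates the class of bug, not the specific error hit on this build.

```python
from typing import Tuple

def flip_limits(lower: float, upper: float) -> Tuple[float, float]:
    """Limits for the same physical joint after its axis sign is reversed (q' = -q)."""
    if lower > upper:
        raise ValueError("lower limit must not exceed upper limit")
    return -upper, -lower

def within_limits(q: float, lower: float, upper: float) -> bool:
    return lower <= q <= upper
```

A pose at q = 1.5 rad is valid under limits (-0.5, 2.0). After the axis flip, the same pose reads q = -1.5. Checking that value against the un-flipped limits wrongly rejects it, while checking against `flip_limits(-0.5, 2.0)` accepts it.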

Servo calibration across 12 motors takes days. EEPROM corruption on one motor required systematic bus isolation to diagnose without taking everything apart, so I built tooling to scan individual motors on the live bus.
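A scan of this kind pings every possible ID and records which ones answer with a valid status packet. A motor whose EEPROM ID was corrupted then shows up at an unexpected address or not at all. The following is a minimal sketch. It assumes the Dynamixel Protocol 1.0-style framing that Feetech SCS/STS servos use: a 0xFF 0xFF header, then ID, LEN, instruction or error byte, parameters, and an inverted-sum checksum, with PING = 0x01. Verify this against Feetech's protocol documentation. The `transport` callable is hypothetical; real serial I/O is left out.

```python
from typing import Callable, Iterable, List, Optional, Tuple

HEADER = b"\xff\xff"
PING = 0x01

def checksum(body: bytes) -> int:
    """Inverted low byte of the sum of ID, LEN, instruction/error and params."""
    return (~sum(body)) & 0xFF

def ping_packet(servo_id: int) -> bytes:
    if not 0 <= servo_id <= 0xFD:  # 0xFE is the broadcast ID
        raise ValueError("servo ID must be in 0..253")
    body = bytes([servo_id, 2, PING])  # LEN = number of params (0) + 2
    return HEADER + body + bytes([checksum(body)])

def parse_status(packet: bytes) -> Optional[Tuple[int, int]]:
    """Return (servo_id, error_byte) from a status packet, or None if malformed."""
    if len(packet) < 6 or packet[:2] != HEADER:
        return None
    length = packet[3]
    if len(packet) != 4 + length:
        return None
    if checksum(packet[2:-1]) != packet[-1]:
        return None
    return packet[2], packet[4]

def scan(transport: Callable[[bytes], bytes], ids: Iterable[int] = range(0xFE)) -> List[int]:
    """Ping each ID via a hypothetical transport (write packet, return reply bytes, b'' on timeout)."""
    found = []
    for sid in ids:
        reply = parse_status(transport(ping_packet(sid)))
        if reply is not None and reply[0] == sid:
            found.append(sid)
    return found
```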

The unified controller was born from frustration. Two independent serial controllers caused port conflicts and timing issues. Merging them onto a single bus with shared timing fixed both problems and simplified the data pipeline.
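With both arms on one bus, a single packet can update every servo, so both arms receive their goals in the same transaction. The following is a minimal sketch of building such a sync-write packet. It assumes the Dynamixel Protocol 1.0-style framing used by Feetech SCS/STS servos, with instruction 0x83 sent to broadcast ID 0xFE. The goal-position register address (42) and the little-endian byte order are assumptions for STS-series servos; check them against the servo's memory table. The source doesn't say whether the unified controller uses sync write.

```python
from typing import Dict

HEADER = b"\xff\xff"
BROADCAST_ID = 0xFE
SYNC_WRITE = 0x83
GOAL_POSITION_ADDR = 42  # assumption: STS-series goal position register

def checksum(body: bytes) -> int:
    """Inverted low byte of the sum of ID, LEN, instruction and params."""
    return (~sum(body)) & 0xFF

def sync_write_packet(start_addr: int, data_len: int, payloads: Dict[int, bytes]) -> bytes:
    """One broadcast packet writing data_len bytes at start_addr on every listed servo."""
    params = bytearray([start_addr, data_len])
    for sid in sorted(payloads):
        data = payloads[sid]
        if len(data) != data_len:
            raise ValueError(f"servo {sid}: expected {data_len} bytes, got {len(data)}")
        params.append(sid)
        params.extend(data)
    length = len(params) + 2  # LEN counts params plus the instruction and checksum bytes
    if length > 0xFF:
        raise ValueError("packet too long for a one-byte LEN field")
    body = bytes([BROADCAST_ID, length, SYNC_WRITE]) + bytes(params)
    return HEADER + body + bytes([checksum(body)])

def goal_positions_packet(goals: Dict[int, int]) -> bytes:
    """Set goal positions (2 bytes each, little-endian by assumption) for servos on both arms at once."""
    return sync_write_packet(
        GOAL_POSITION_ADDR, 2, {sid: pos.to_bytes(2, "little") for sid, pos in goals.items()}
    )
```

For all 12 servos the packet is 4 + (12 × 3 + 2 + 2) = 44 bytes. That is well within the one-byte length field, so a full control step for both arms fits in a single write.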